During many of my presentations, as well as in meetings with companies, the topic of quality comes up. As I stress the importance of speed, continuous integration and continuous deployment, a general unease settles over the group until someone raises the question of how to ensure quality. Frequently, this is followed by a couple of anecdotes about things that went horribly wrong at some customer. And then, finally, some people bring up certification and use it as an excuse for why none of these concepts apply to them, as they are different.

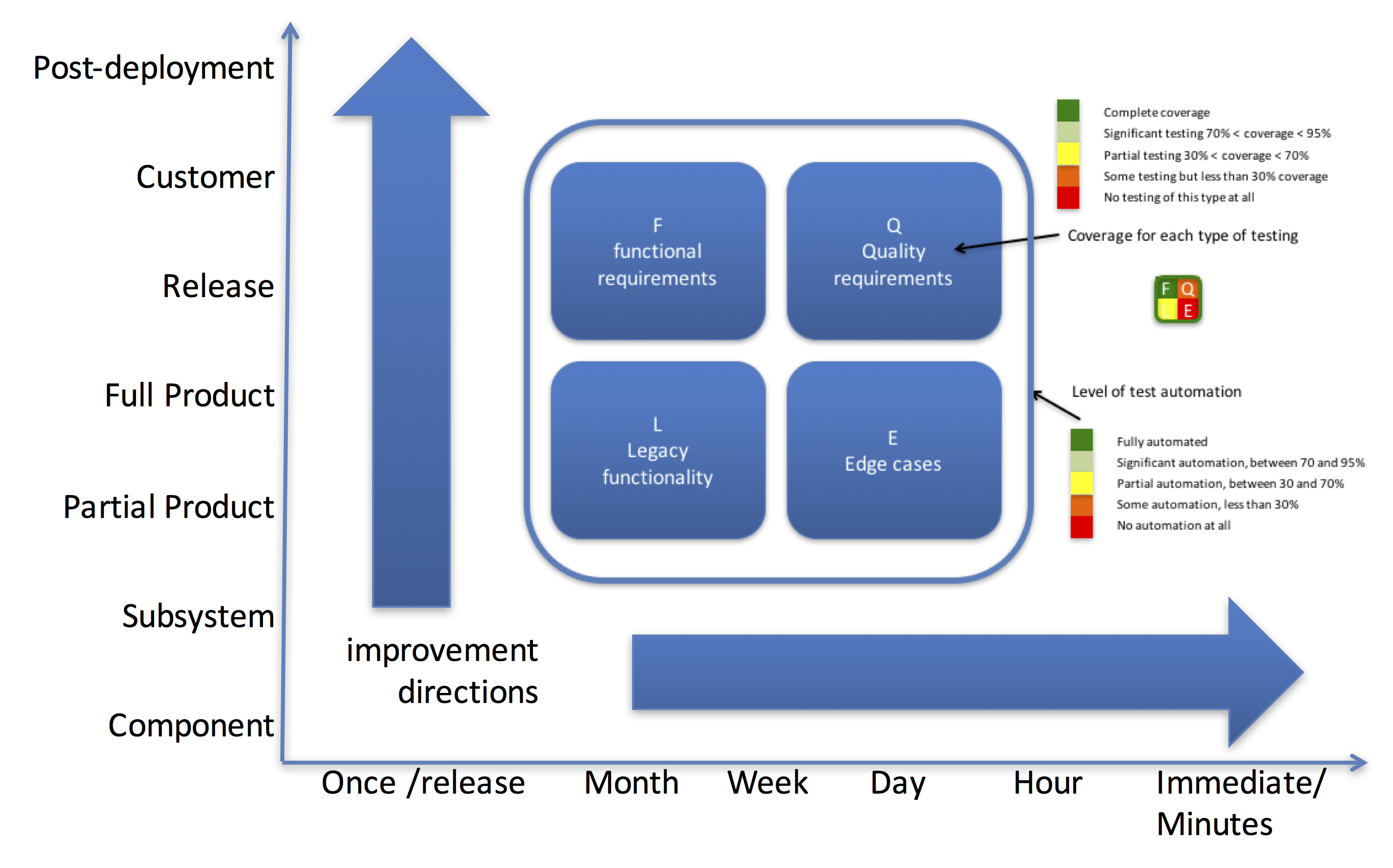

In my work on the CIVIT model (see figure below), as well as on other models together with industrial PhD candidates, it has become increasingly clear to me that continuous integration goes a long way towards addressing quality issues. The notion of always being at production-level quality and never letting quality drop enables a different way of operating in software development. In many embedded systems companies, software is viewed as the problem child because it is always late and suffers from quality issues. Of course, in most of those companies, senior leaders conveniently forget that the delays often started in mechanical design and hardware, and that the software cannot be properly tested until the actual system is available. Software is expected to absorb the delays of the other disciplines and still make sure the delivery date is kept. That leads to quality issues, as the quality assurance phase gets squeezed the most. With continuous integration, assuming sufficient access to the system, you can at least maintain quality for the current feature set.

Even in embedded systems companies that are very good at continuous integration, and potentially even at continuous (or at least frequent) deployment, there is still a lingering culture in which not a single issue is allowed to slip through to customers. In my experience, once a continuous integration environment is in place, the equation actually changes: how many resources to allocate to pre-deployment testing becomes an economic decision. The organization can decide what level of issues in the field is acceptable, considering the economic implications. Especially when a system can be deployed in a wide variety of configurations, setting up test environments for all of them is an extremely costly ambition. This is not an artificial example: the companies I work with typically have hundreds to millions of configurations for their successful systems.

A second challenge is that R&D departments typically feel that their responsibility ends with the release of the software, and in many cases teams have little insight into how a version of the software is performing in the field. There are no incentives to look at the data, and R&D only looks at post-deployment performance when customer service rings the alarm bells. Of course, in true continuous deployment contexts, DevOps addresses this, but when releases are less frequent and there are separate release and customer service organizations, R&D teams tend to focus on the next release and its functionality rather than on today's hassles. After all, according to most, that is certainly more fun!

Even in the embedded systems world, this has to change due to the new context in which we're building and deploying software. One of the consequences of continuous deployment is that there typically is a rollback mechanism in place, as well as mechanisms to track the behaviour of the system. Consequently, it is becoming increasingly feasible to push certain testing activities to the post-deployment stage. Testing software after it has been deployed at customers is a big no-no in many organizations, but it's a logical consequence of continuous deployment! It is much more cost-effective to try new software out in a concrete configuration in the field and roll back in case of issues than to test every configuration in the lab. And with the ability to detect the need to roll back before the user even notices a problem, it does not affect the customer's perception of system quality!
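To make the idea of detecting the need to roll back a little more concrete, here is a minimal sketch, assuming that each deployed unit reports simple error and request counts as telemetry. It compares the field error rate of a newly deployed version against the previous version and decides whether to roll back. The `HealthSample` type, the function names and the threshold values are hypothetical illustrations chosen for this example, not part of any specific product or of the CIVIT model.

```python
from dataclasses import dataclass


@dataclass
class HealthSample:
    """One telemetry reading from a deployed unit (hypothetical schema)."""
    errors: int
    requests: int


def error_rate(samples):
    """Fraction of failed requests across all samples; 0.0 if there is no traffic."""
    total_requests = sum(s.requests for s in samples)
    if total_requests == 0:
        return 0.0
    return sum(s.errors for s in samples) / total_requests


def should_roll_back(baseline, candidate, tolerance=1.5, min_requests=100):
    """Decide whether the candidate version performs worse in the field than the baseline.

    tolerance: assumed factor by which the candidate's error rate may exceed the baseline's.
    min_requests: assumed minimum amount of field traffic before any decision is made.
    """
    if sum(s.requests for s in candidate) < min_requests:
        return False  # not enough evidence yet; keep observing
    # Note: with a zero baseline error rate, any candidate error triggers a rollback.
    return error_rate(candidate) > error_rate(baseline) * tolerance


# Usage: field data from the previous version and three possible situations
# for the newly deployed version (all numbers are made up for illustration).
baseline = [HealthSample(errors=2, requests=1000)]
healthy = [HealthSample(errors=2, requests=900)]
broken = [HealthSample(errors=30, requests=1000)]
too_early = [HealthSample(errors=5, requests=50)]

print(should_roll_back(baseline, healthy))    # False
print(should_roll_back(baseline, broken))     # True
print(should_roll_back(baseline, too_early))  # False
```

Waiting for a minimum amount of field data avoids rolling back on noise, while the tolerance expresses how much degradation the organization is willing to accept before acting. Both are exactly the kind of economic trade-off discussed earlier, rather than fixed technical constants.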

So, my call to action is to start considering post-deployment testing as an integral part of your testing infrastructure and to make careful decisions about when to test which aspects of the system's behaviour. Even if it seems like sacrificing quality, it is in fact an approach to improving quality. Quality is experienced in the field, not in test labs. Consequently, the best place to determine quality is in the field. Don't be afraid of post-deployment testing; rather, embrace it!

In software development, external information is more valuable than internal guesses. However, people often seem to be more comfortable with the latter, especially at the lower levels of the CIVIT model (components, subsystems). Furthermore, what is considered internal and external is relative. My experience is that it helps if people consider each next level up to be their customer, instead of everybody focusing on the end customer. This way, information can trickle through the system. The end goal is testing in production, but ecosystem-driven testing, where the parts of the ecosystem closer to the customer drive the testing of lower levels, can be a good intermediate step.