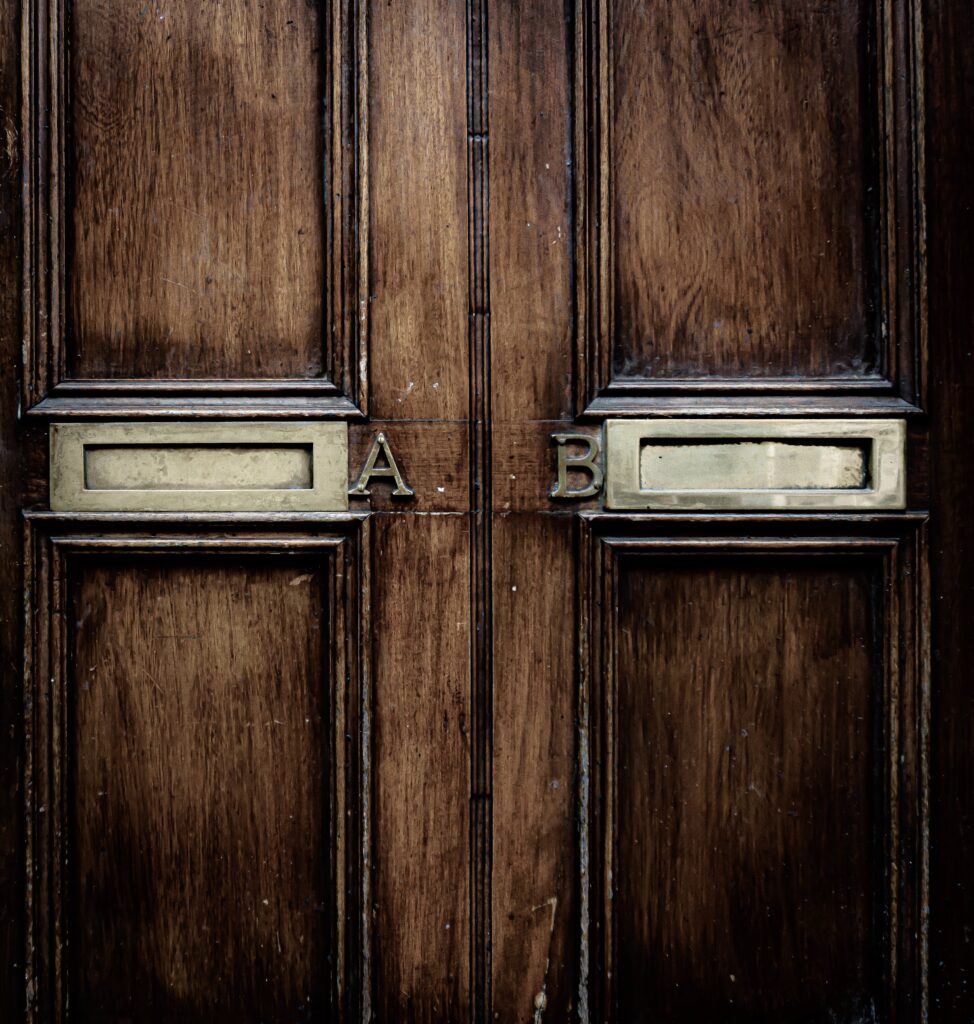

In the online world of software as a service (SaaS), A/B testing in some form is used continuously for virtually any type of development. In an A/B test, two alternative realizations of a feature or piece of functionality are developed; one is presented to a control group (alternative A) and the other to a test group (alternative B). An outcome metric, such as conversion, is tracked for both groups to determine which alternative performs better. Numerous variations on this setup exist, but the basic notion is that we use data from actual users to determine the preferred alternative to roll out to all users.
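To make "determine which of the alternatives performs better" concrete, here is a minimal sketch of one common way to compare two conversion rates: a pooled two-proportion z-test. The function name and the visitor and conversion counts are made up for illustration, and real experimentation platforms typically add more safeguards than this.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally well.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: 5,000 users per group, 200 vs. 250 conversions.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests that the observed difference between A and B is unlikely to be due to chance alone; what threshold counts as "small" is a choice the team has to make up front.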

When working with companies in the embedded-systems industry and the B2B space, it surprises me to meet lots of people who are either against the use of A/B testing or believe that it doesn’t apply to them and their industry. The arguments are multiple but generally fall into two main categories: “can’t do it” and “don’t need it.”

The “can’t do it” group has two main subcategories. The first is concerned with collecting and using data from the field. As discussed in outdated belief #6, many companies either don’t collect data from the field at all as there’s a concern about the customer not allowing it, or the collected data can only be used for purposes directly benefiting the customer from whom it’s collected. A/B testing requires data from as many customers as possible, preferably all, to reach the data depth needed for statistical validation.
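As a rough illustration of why data depth matters, the sketch below estimates how many users each group needs before a given difference in conversion rate can be detected reliably. It uses the standard normal-approximation formula for comparing two proportions; the function name, the 4% and 5% conversion rates, and the default significance level and power are assumptions chosen for the example.

```python
import math
from statistics import NormalDist

def sample_size_per_group(p_base, p_variant, alpha=0.05, power=0.8):
    """Approximate users needed per group to detect p_base vs. p_variant."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # desired statistical power
    variance = p_base * (1 - p_base) + p_variant * (1 - p_variant)
    effect = p_base - p_variant
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)

# Hypothetical rates: detecting a lift from 4% to 5% conversion.
n = sample_size_per_group(0.04, 0.05)
print(f"users needed per group: {n}")
```

Under these assumptions, even a full percentage point of improvement requires thousands of users per group, and smaller effects require far more. This is why data from only a handful of customers is rarely enough.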

The second “can’t do it” subcategory is concerned with using customers to experiment on. The nature of A/B experiments is that, generally, one alternative performs better than the other. Logically, that means that the customer group exposed to the inferior alternative experiences a lower level of performance, and customers may complain about getting an inferior solution compared to others even though they’re charged the same. In the literature on multi-armed bandit (MAB) algorithms, this loss is referred to as regret, and the algorithms are designed to minimize it.
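To illustrate what regret means in practice, the following sketch compares a fixed 50/50 A/B split with a simple epsilon-greedy bandit that gradually shifts traffic toward the alternative that appears to perform better. Epsilon-greedy is just one of many MAB strategies, picked here for brevity; the function names and the 10% and 20% success rates are hypothetical and exaggerated for clarity.

```python
import random

def epsilon_greedy_regret(rates, rounds, epsilon=0.1, seed=0):
    """Simulate an epsilon-greedy bandit and return its expected regret."""
    rng = random.Random(seed)
    pulls = [0] * len(rates)
    successes = [0] * len(rates)
    best = max(rates)
    regret = 0.0
    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: pick an alternative uniformly at random.
            arm = rng.randrange(len(rates))
        else:
            # Exploit: pick the alternative with the best observed rate so far.
            estimates = [s / n if n else 0.0 for s, n in zip(successes, pulls)]
            arm = estimates.index(max(estimates))
        pulls[arm] += 1
        successes[arm] += rng.random() < rates[arm]
        # Regret: expected loss from not serving the best alternative.
        regret += best - rates[arm]
    return regret

def fixed_split_regret(rates, rounds):
    """Expected regret when traffic is split evenly for the whole experiment."""
    per_arm = rounds / len(rates)
    return sum((max(rates) - r) * per_arm for r in rates)

rates = [0.10, 0.20]  # hypothetical true success rates of A and B
bandit = epsilon_greedy_regret(rates, rounds=10_000)
split = fixed_split_regret(rates, rounds=10_000)
print(f"epsilon-greedy regret: {bandit:.0f}, fixed 50/50 regret: {split:.0f}")
```

The trade-off is that a bandit reduces the number of customers exposed to the inferior alternative, while a fixed split keeps the statistical analysis simpler.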

The “don’t need it” group also consists of two subgroups. The first is typically found in the B2B space where interaction with customers is often focused on system requirements. This group believes that the customers specify what they want and as long as the system does what the specification says, there’s no need to do anything else.

The second “don’t need it” group tends to consist of hard-core engineers who believe that every problem can be solved by a proper engineering approach where we can reason our way to a solution that’s provably optimal and perfectly balances all conflicting forces. If we can mathematically prove that the current solution is optimal, there’s no need for experimentation.

These arguments from the “can’t do it” and “don’t need it” groups are based on several misconceptions. The first concerns data: the ownership of data generated by products in the field is something that needs to be, and can be, addressed through legal means. The automotive industry solved this decades ago in the elaborate contracts that aspiring car owners sign when buying a new vehicle. Every company needs to sort this out and avoid leaving customers and employees in limbo about it.

Second, while it’s of course correct that A/B testing results in one customer group having the inferior solution, this is inherent in the scientific method that has been at the basis of virtually all progress that society has made over the last centuries. When the medical community uses randomized trials (in essence, A/B testing) to establish the efficacy of drugs and treatments, patients receiving the inferior alternative evidently suffer, but this is viewed as the price to pay for progress. The same is true for software-intensive systems.

Third, even if you have a lengthy requirement specification (in the defense industry, for instance, it’s not unusual to have well over 10K requirements), the concrete instantiation of each requirement still leaves lots of room for interpretation by the engineers building the system. Rather than assuming that the realization thought up by an engineer in a cubicle on a Friday morning is the only way forward, it makes perfect sense, at least for the most important requirements, to experiment with alternatives to achieve the best possible outcome for your customers.

Fourth, the ability of engineers to mathematically calculate the optimal solution applies to a very limited set of cases. In almost all situations, the context in which the system operates will affect the optimality of the solution, and the variation in contexts is so vast that it can’t be modeled cost-effectively. As a proxy, many engineers resort to optimizing systems for a specific lab setting, but this is of course far from representative of the real world. For all the mathematical work on optimizing combustion in the cylinders of car engines, it’s well known in the automotive industry that virtually any aspect of the car, the driver and the context influences fuel efficiency. The majority of these factors are treated as covariates and not modeled in the mathematical sense.

A/B testing is relevant in any context where a mathematical optimum can’t be established easily. As such, it’s relevant for virtually any company and system. With the increased connectivity of systems and a continuously growing expectation of improvements to systems throughout their entire economic life, it’s clear that A/B testing is a capability that every industry, company and team needs to build up. The rule is very simple: if you don’t know, experiment.

To get more insights earlier, sign up for my newsletter at jan@janbosch.com or follow me on janbosch.com/blog, LinkedIn (linkedin.com/in/janbosch), Medium or Twitter (@JanBosch).