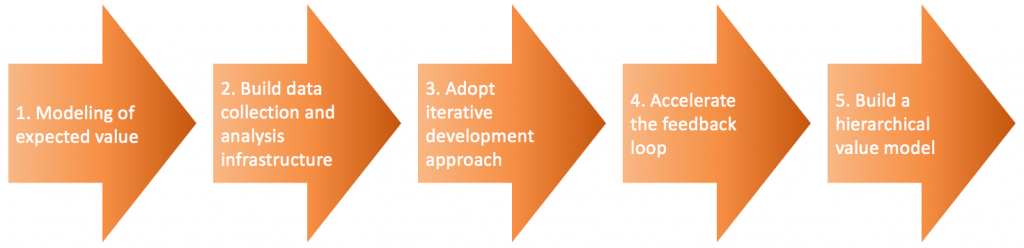

In last week’s blog post, I discussed the first step in adopting data-driven development (see figure below), i.e. modeling feature value in quantitative terms. Once we have described the value that we expect from a feature, the next step is to ensure that we can measure and collect the data needed to determine whether the factors we are looking to influence are indeed influenced by the new feature.

Collecting the relevant data requires an infrastructure that receives and stores the data and makes it available for later analysis. There are numerous tools out there that support this process. Because of this, the challenges of building the data infrastructure typically do not concern the basic plumbing, but rather aspects such as customer relations, legal constraints, differences of opinion between individuals and functions in the company, the cost of data collection and storage, etc.

At this point in the adoption of data-driven development, the focus should be on keeping everything as simple as possible. Hence, we only prepare for collecting data related to the feature at hand from a subset of our system and customer base. We select the customers so as to minimize the amount of work needed to get to actual data collection. This may require selecting certain geographies where laws and regulations are more relaxed, or selecting friendly customers that already have a relationship with the company that allows for more access to system and customer behavior.

Many companies already have some form of data infrastructure in place, but almost always this infrastructure is concerned with ensuring the quality of products deployed in the field at customers. In practice, the data collected by this infrastructure typically turns out to be irrelevant for determining value delivery. So, although the same infrastructure, in whole or in part, may be applicable, the kinds of data collected need to change.

In practice, companies can use at least two strategies for collecting new types of data. The first one, extensively used in online software but also elsewhere, is continuous deployment. If we deploy new versions of software every agile sprint or even more frequently, of course we can include instrumentation for data collection in the continuously deployed software as well.

The second strategy requires a bit more in terms of planning and proactive work. Some companies that I work with have built a capability into the system that allows them to collect data from hundreds or thousands of points. These companies have tried to identify everything that anyone in the company could possibly want to measure. Of course, it is not feasible to collect all of this data all the time, so each data collection point is initially turned off, but is part of a configurable super-set of data points. Whenever there is a request in the organization, the relevant data point can be turned on to start the data stream.
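To make the idea of a configurable super-set of data points a bit more concrete, here is a minimal sketch in Python. It is only an illustration under my own assumptions, not how any particular company has built this: the class names, the data point names and the hard-coded readings are all hypothetical, and a real system would also need to ship the collected values to a backend.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class DataPoint:
    """One collection point; it stays off until someone asks for its data."""
    name: str
    read: Callable[[], float]  # hypothetical function that samples the value
    enabled: bool = False


class DataPointRegistry:
    """Holds the full super-set of data points built into the system."""

    def __init__(self) -> None:
        self._points: Dict[str, DataPoint] = {}

    def register(self, name: str, read: Callable[[], float]) -> None:
        # Every point is registered in the "off" state.
        self._points[name] = DataPoint(name, read)

    def enable(self, name: str) -> None:
        self._points[name].enabled = True

    def disable(self, name: str) -> None:
        self._points[name].enabled = False

    def collect(self) -> Dict[str, float]:
        # Only enabled points are sampled, so disabled ones cost nothing here.
        return {p.name: p.read() for p in self._points.values() if p.enabled}


# Illustrative usage with made-up data points and readings.
registry = DataPointRegistry()
registry.register("engine_temp_c", lambda: 87.5)
registry.register("door_open_count", lambda: 12.0)

before = registry.collect()         # nothing is enabled yet
registry.enable("door_open_count")  # a request comes in from the organization
after = registry.collect()          # only the requested point is reported
```

The design choice worth noting is that switching a data point on is a configuration change rather than a software release, which is what makes this approach usable in systems that are not continuously deployed.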

When validating whether a feature under development delivers on the modeled, expected outcome, we first collect baseline data, then activate the feature and measure any changes, or deltas, in the data that can be attributed to the feature. To increase confidence, some companies subsequently turn the feature off again to check whether the measured factors return to the baseline. Of course, traditional A/B testing tests feature impact in parallel rather than serially. In embedded systems, however, ensuring that the two cohorts are completely comparable is more challenging, as the contexts in which the systems operate may differ and be hard to compare.
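The serial baseline, feature-on, feature-off comparison described above can be sketched as follows. This is a deliberately simplified illustration: the function names, the tolerance and the sample values are all made up, and comparing means is my own simplification rather than a proper statistical test.

```python
from statistics import mean
from typing import Sequence


def phase_delta(baseline: Sequence[float], feature_on: Sequence[float]) -> float:
    """Change in the mean of the measured factor once the feature is active."""
    return mean(feature_on) - mean(baseline)


def returned_to_baseline(
    baseline: Sequence[float],
    feature_off: Sequence[float],
    tolerance: float,
) -> bool:
    """True if the mean after switching the feature off is close to baseline."""
    return abs(mean(feature_off) - mean(baseline)) <= tolerance


# Made-up measurements of one factor during the three phases.
baseline = [10.0, 11.0, 9.0, 10.0]      # feature not yet active
feature_on = [12.0, 13.0, 12.0, 11.0]   # feature activated
feature_off = [10.0, 10.0, 11.0, 10.0]  # feature turned off again

delta = phase_delta(baseline, feature_on)
reverted = returned_to_baseline(baseline, feature_off, tolerance=0.5)
```

If the factor does not return to the baseline after the feature is turned off, that is a signal that something other than the feature, for instance a seasonal effect, may explain the observed delta.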

Finally, the data scientists that I work with often lament the lack of basic understanding that engineers and developers exhibit concerning the collection and analysis of data. This post is too short to dive into the specifics, but it is important to remember that it is extremely easy to draw incorrect conclusions from data. Constant vigilance against incorrect interpretation, together with the use of tools and mechanisms to triangulate and ensure that conclusions are well-founded, is of critical importance.

Concluding, the second step in adopting data-driven development is the establishment of a data collection and analysis infrastructure. Although numerous tools are available, this still tends to result in challenges pertaining to the user, legal constraints, the cost of connectivity and storage, and a lack of strategic alignment. Nevertheless, good solutions for data collection exist and have been proven in practice. The final challenge remains ensuring correct interpretation of the data during the analysis process. This requires that the company structures the data in a suitable way and uses tools to triangulate and ensure correct interpretation of the data. Enjoy building your data infrastructure!

To get more insights earlier, sign up for my newsletter at jan@janbosch.com or follow me on janbosch.com/blog, LinkedIn (linkedin.com/in/janbosch) or Twitter (@JanBosch).